Key Takeaways

Uptime monitoring is crucial for website success. Frequent downtime harms User Experience (UX), sales, and SEO rankings, impacting profits.

Tools like WP Engine Site Monitoring provide critical visibility. Alerts and performance insights help prevent revenue loss.

Plugins like Jetpack offer real-time site backups and downtime monitoring. Features like SMS alerts enhance monitoring capabilities.

Leaders should evaluate uptime monitoring solutions for proactive site management. It’s essential to choose tools that offer frequent checks and alerts.

Your website’s availability is a critical factor in its success. A website that experiences downtime too frequently may lose visitors, which can directly impact its earnings and your business’s profits. To prevent this, you need a reliable way to keep tabs on your site’s performance.

Fortunately, there are plugins and services available that monitor your website and alert you to any anomalies. You can then have a better idea of how your website is performing, and whether there are any problems you need to address.

In this post, we’ll introduce six of the top uptime monitoring solutions for your WordPress website. We’ll then provide a concise breakdown of each, including how they stack up. Let’s get started!

Key highlights

- Uptime monitoring consists of frequent checks on your website’s server and provides alerts if your site goes offline. This is crucial for a quick response to a site outage.

- Frequent downtime harms User Experience (UX), sales and revenue, and Search Engine Optimization (SEO) rankings, which is why it’s so important for businesses to have reliable uptime.

- The best tools for proactive uptime monitoring provide short check intervals, send alerts, and leverage multi-location verification to avoid false downtime reports.

- WP Engine Site Monitoring and other tools provide alerts and give visibility into site outages, uptime, and response time.

What is uptime monitoring?

Uptime monitoring is a monitoring service that keeps tabs on whether your website is online. An uptime monitoring tool will check your website’s server at a regular frequency (determined by the service and your plan), and alert you when your website goes offline for any reason.

Why uptime monitoring is important

The frequency with which your website is offline (i.e. experiencing ‘downtime’) will have a major impact on your website’s (and business’) success. This is why it’s important to ascertain the current status of your website.

Frequent downtime can lead to several negative consequences, including:

- Negative impact on your search engine rankings

- Poor User Experience (UX), which can lead to decreased customer loyalty and a damaged brand reputation

- Loss of profits and income (including sales and ad revenue)

How does uptime monitoring work?

When you visit a website, a short ‘discussion’ occurs between your web browser and the site’s server. The server then returns an HTTP status code, which indicates whether the request succeeded (online) or failed (offline).

Uptime monitoring services do the same, though they send an automated request (a ‘ping’) to the server instead of visiting with a browser. The monitoring service then reads the HTTP status code and alerts you if downtime is detected.
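To make this concrete, here is a minimal Python sketch of a single check. It is not how any of the tools below are implemented. The function names `fetch_status` and `is_online` are our own, and the rule that any 2xx or 3xx response counts as ‘online’ is an assumption; real services may apply stricter rules.

```python
import urllib.error
import urllib.request
from typing import Optional


def is_online(status_code: Optional[int]) -> bool:
    """Assumed rule: 2xx and 3xx mean 'online'; anything else, or no response, means 'offline'."""
    return status_code is not None and 200 <= status_code < 400


def fetch_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Send one HEAD request and return the HTTP status code, or None if no response arrived."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 503.
        return error.code
    except OSError:
        # DNS failure, refused connection, or timeout: the server never answered.
        return None
```

Passing the result of `fetch_status("https://example.com")` to `is_online` gives a single up/down reading. A monitoring service repeats this kind of check on a schedule and sends an alert when the reading is ‘offline’.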

What’s more, website monitoring services will typically use multiple locations to ping the server. This reduces the chance of false alarms: a failure seen from only one location is more likely to be a network problem near that location than a real outage.
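The multi-location idea can be sketched as a simple voting rule. In this Python example, the location names and the `min_failures` threshold are hypothetical; each service decides for itself how many locations must agree before it sends an alert.

```python
from typing import Mapping


def confirm_downtime(online_by_location: Mapping[str, bool], min_failures: int = 2) -> bool:
    """Report downtime only when at least `min_failures` locations saw the site as offline."""
    failures = [location for location, online in online_by_location.items() if not online]
    return len(failures) >= min_failures


# One failing location alone is treated as a likely false alarm.
single_failure = {"new-york": True, "london": False, "sydney": True}
# Failures from several locations at once are treated as a real outage.
widespread_failure = {"new-york": False, "london": False, "sydney": True}

print(confirm_downtime(single_failure))
print(confirm_downtime(widespread_failure))
```

The higher the threshold, the fewer false alarms you receive, at the cost of ignoring outages that only affect visitors in one region.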

WordPress uptime monitoring plugins

There are a few features to look for in uptime monitoring plugins and software when considering your options. The more frequent the monitoring, the better. However, you also want to consider whether the plugin offers website performance reports, downtime alerts, and multiple location server checks.

Let’s look at some example solutions.

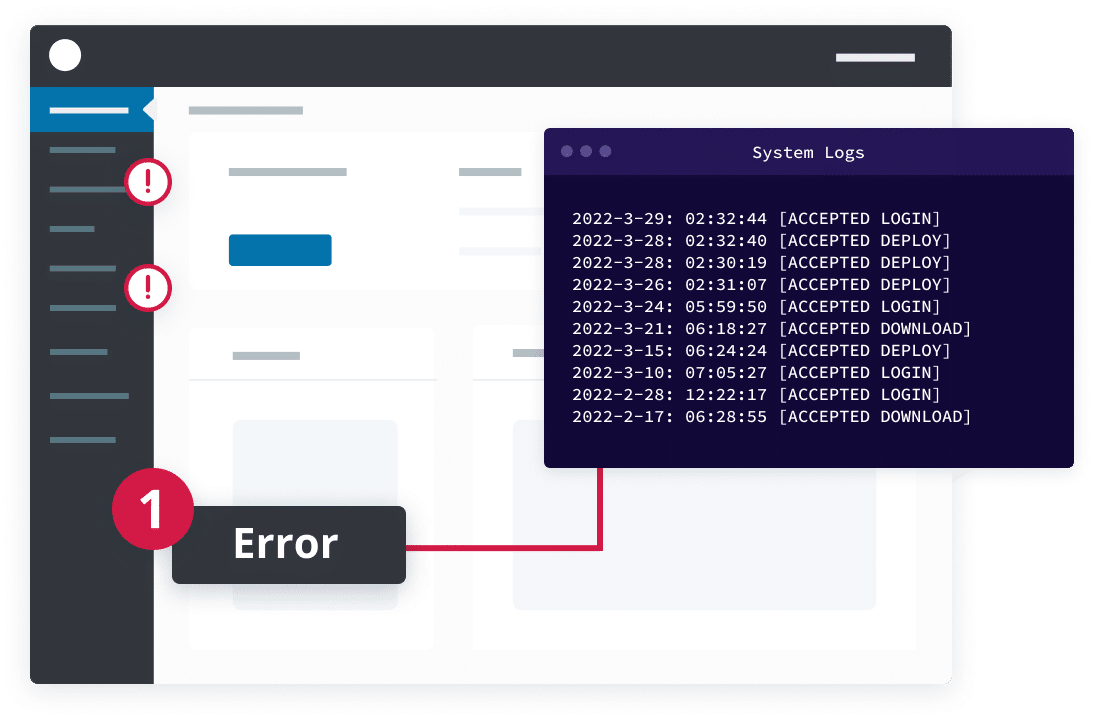

1. WP Engine Site Monitoring

Get the insights you need to keep your sites up and performing. WP Engine Site Monitoring alerts you to errors and provides critical visibility into outages, server uptime, and average response times across all of your websites, and includes the ability to link to site-specific access logs when an outage is detected.

Price: Starting at just $5

Pros: Site Monitoring helps you stay on top of site status and alerts you via email immediately when issues inevitably come up. Take action right away with detailed access logs to quickly resolve issues before they become more serious.

Cons: Site Monitoring is currently only available for sites hosted on WP Engine’s platform.

2. ManageWP Worker

ManageWP Worker is a WordPress management plugin that includes cloud backups, automated updates, client reports, and performance and security checks. The plugin also offers pro add-ons, including Advanced Client Report and Uptime Monitor.

Price: The Lite plugin is free, but uptime website monitoring requires you to purchase the add-on for $1 per website per month.

Pros: You get instantaneous downtime alerts (both via email and SMS), plus the ability to manage an almost unlimited number of websites.

Cons: The Uptime Monitor is a paid feature only.

3. Jetpack

Jetpack is a multi-module design, marketing, and security plugin created by Automattic, the company behind WordPress.com. Most pertinently, it includes real-time site backups (branded as VaultPress) and downtime monitoring, among a number of other useful features.

Price: The base plugin is free (which includes downtime monitoring), but you can upgrade to the Pro version, starting at $3.50 per month, for more features.

Pros: Frequent server checks, and instantaneous downtime notifications via email and SMS.

Cons: Downtime monitoring is just one module of many, so it isn’t as feature-intensive as other solutions.

4. Uptime Robot

Uptime Robot is an online uptime monitor. The software enables you to integrate its API into your website, then receive frequent server monitoring updates. You also have access to downtime statistics (either two months or 12 months of logs).

Price: The free account includes five-minute interval monitoring, but you can upgrade to a premium version with one-minute interval monitoring starting at $4.50 per month.

Pros: Multiple website checks, frequent server checks, and server uptime and downtime statistics.

Cons: Unfortunately, SMS alerts are limited.

5. Internet Vista

Internet Vista is another online uptime monitor. It includes a cloud-based monitoring tool, along with its own REST API (handy for developers). There’s also top-notch customer support on hand to help you if you get stuck.

Price: Plans start at $2.48 per month, with varying uptime checks from one minute (on the pricier end) to 60 minutes.

Pros: Website performance reports, real-time alerts, and great customer support.

Cons: The software has pricier monitoring plans than other solutions, and we’d like to see more flexibility between monitoring intervals.

6. Super Monitoring

Super Monitoring provides minute-by-minute server monitoring, along with both a WordPress plugin and Google Analytics integration. The software uses multiple location checks to reduce false alarms, and provides real-time notifications.

Price: Plans start at $5.99 per month, though you may have to purchase additional SMS credits.

Pros: One-minute interval monitoring, as well as real-time notifications (email and SMS) and multiple location server checks.

Cons: Plans are on the pricier side, and the additional cost of SMS alerts means you may not always be kept up-to-date on downtime.

WordPress uptime monitoring plugins overview

Because reacting quickly to downtime is crucial for website success, it’s important to select the right plugin for your needs. Here’s a more concise look at the solutions we’ve mentioned, as ranked by us.

A-Grade

- Uptime Robot is one of the fuller-featured uptime monitoring solutions on our list. The free plan offers five-minute monitoring intervals, as well as both email and SMS alerts. You also have access to uptime/downtime statistics (two months for the free plan, and 12 months for premium ones). By upgrading, you have access to more frequent monitoring (every minute).

- Super Monitoring is another online monitoring service on our list, though there is no free plan available. Your server will be checked every minute from multiple locations to prevent false downtime alarms.

B-Grade

- ManageWP Worker has just about all you’d need to monitor your website’s server. This includes instantaneous downtime alerts via email and SMS, and you can also monitor an unlimited number of websites (depending on your plan). For an all-around management plugin, it’s a good solution.

- Jetpack is a multi-purpose plugin that provides solid website uptime monitoring features for WordPress users. The free plan provides you with the complete set of downtime monitoring features, including five-minute interval server checks and instantaneous alerts.

C-Grade

- Internet Vista is one of the pricier options with less frequent website downtime monitoring. Longer intervals may be okay for smaller websites, but even just a few minutes of unnoticed downtime can be disastrous for larger ones. Of course, you can pay for shorter intervals, but it comes at a cost.

With so much at stake, it’s vital you choose a WordPress hosting service with high reliability, wide website availability, and low downtime. That’s where WP Engine comes in. To learn more about WP Engine’s features and our offerings, take a look at our managed hosting plans.

FAQs

What is Uptime Monitoring and How Does It Work?

Uptime monitoring is a monitoring service that regularly checks to see if your website is online, and will alert you if website downtime is detected. To determine if your website is online, the uptime monitor ‘pings’ your server, which results in an HTTP status code response that will indicate if the site is online or offline. If the site is determined to be offline, a notification will be sent to you.

Why is Uptime Monitoring Important for Businesses?

Businesses must have reliable uptime or run the risk of negative consequences like poor User Experience (UX), loss of sales and revenue, and negative impacts to search engine rankings.

Uptime monitoring services help business owners quickly identify site downtime, allowing them to respond quickly to the problem and avoid the issues associated with downtime.

How Often Should You Monitor Uptime?

Uptime monitoring should be done frequently to ensure a quick resolution to site downtime. Five-minute interval checks are common amongst free plans, but one-minute intervals are typical for paid uptime monitoring plans.
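To see why the interval matters, it helps to work through the arithmetic. The Python sketch below is an illustration under simple assumptions: checks run at a fixed interval, and an alert fires after a set number of consecutive failed checks (the `confirmations` parameter is our own, not a setting from any particular tool).

```python
MINUTES_PER_DAY = 24 * 60


def checks_per_day(interval_minutes: int) -> int:
    """Number of checks a monitor runs in one day at a fixed interval."""
    if interval_minutes <= 0:
        raise ValueError("interval_minutes must be positive")
    return MINUTES_PER_DAY // interval_minutes


def worst_case_detection_delay(interval_minutes: int, confirmations: int = 1) -> int:
    """Upper bound, in minutes, between a site going down and the alert firing.

    Worst case: the site fails just after a check passes, so the first failed
    check arrives one full interval later, and each extra confirmation adds
    another interval.
    """
    return interval_minutes * confirmations


for interval in (5, 1):
    print(
        f"{interval}-minute interval: {checks_per_day(interval)} checks per day, "
        f"up to {worst_case_detection_delay(interval)} minutes before an alert"
    )
```

Under these assumptions, a five-minute interval means 288 checks per day and up to five minutes of unnoticed downtime, while a one-minute interval means 1,440 checks per day and at most one minute.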

What are the Common Causes of WordPress Downtime?

There are many common causes of WordPress downtime, including SSL certificate problems, host/server issues, plugin or theme conflicts, PHP and database errors, traffic spikes, and more.