Key Takeaways

Nearly three-quarters (72%) of digital agencies are actively adjusting their web development practices to better serve automated systems and machine consumers.

Improving backend data layers (32%) and giving content managers the tools to easily create clear, AI-friendly structured content (37%) are the top priorities for modern development teams.

Dual-audience architecture requires strict, uncompromising adherence to schema markup and highly structured data formatting to ensure algorithmic comprehension.

Model Context Protocol (MCP) servers are rapidly emerging as a required standard for reliable data exposure to external artificial intelligence platforms.

A highly performant, API-first hosting platform with robust bot management is non-negotiable for safely handling the complex, high-compute technical queries generated by the agentic web.

Seventy-two percent of agencies have adjusted their web development practices to better serve automated systems, according to WP Engine’s research report, The Next Wave: How AI is Changing the Digital Agency Model in Building Websites For Both Humans and Machines. This shift marks the rise of the agentic web. The industry is moving rapidly past the era of the single-audience website and into a paradigm that demands a dual-layered approach to digital architecture.

Websites must now be engineered to feed clean, highly structured data to machine algorithms. At the same time, they must continue to connect with and engage human visitors. Bridging this gap between front-end usability and back-end machine readability requires specific technical steps, a deep understanding of new search paradigms, and a highly reliable API-first infrastructure.

This transformation applies to agencies of all sizes, fundamentally altering how technical value is delivered and monetized. Boutique web studios should recognize that preserving client search visibility helps their clients’ businesses keep growing in an AI-driven market. Agencies with dedicated engineering teams will protect margins by standardizing on new web architecture early, which avoids unnecessary tech debt and creates workflow efficiencies. And agencies with enterprise clients will retain their competitive edge when pursuing high-value clients and projects.

The new technical baseline for search and discovery

For years, agencies optimized content exclusively for keyword ranking and the user experience. Success was measured by human clicks generated from a traditional search engine results page and continued engagement on the site.

Today, traditional search engine optimization is evolving into Generative Engine Optimization (GEO). AI agents and large language models (LLMs) require specific data formatting. This allows them to accurately parse a business’s logic, service offerings, and underlying value. Without creating an architecture that serves both audiences, brands risk disappearing from search and not showing up in AI citations.

The evolution of GEO

Search Engine Land’s guide on Generative Engine Optimization explains this critical shift in the digital landscape. The publication emphasizes that clear, plain-language text, scannable formats, and authoritative citations are actively rewarded by modern automated systems. Content that relies on vague buzzwords or poor heading structures is entirely ignored by bots seeking concrete answers.

To navigate this, agencies must adapt their content templates to include upfront summaries and FAQ sections to capture featured snippets. For a boutique agency managing client expectations, this means shifting the monthly reporting conversation entirely.
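As a rough illustration of what an FAQ template can emit for machine consumers, the sketch below builds schema.org `FAQPage` markup from question-and-answer pairs. The function name `build_faq_jsonld` and the sample content are assumptions made for this example; only the schema.org types (`FAQPage`, `Question`, `Answer`) come from the public vocabulary.

```python
import json


def build_faq_jsonld(pairs):
    """Return a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


# Hypothetical content, for illustration only.
faq_pairs = [
    (
        "Does optimizing for machines hurt human UX?",
        "No. Clear headings and structured data help both audiences.",
    ),
]
faq_markup = build_faq_jsonld(faq_pairs)

# The markup would typically be embedded in the page head or body.
print(f'<script type="application/ld+json">\n{faq_markup}\n</script>')
```

Generating the markup from the same question-and-answer pairs that render the visible FAQ keeps the human and machine versions from drifting apart.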

Instead of merely showing a client their keyword rankings, agencies must assess how effectively AI agents understand the client’s brand. Additionally, agencies will need to track the sentiment and authority the AI agents are assigning the brand.

By structuring content to answer questions immediately, agencies ensure clients become the source of truth when machines scrape the web. This shift gives agencies an opportunity to transform themselves from an execution vendor into a long-term strategic partner.

Balancing machine relevance with human quality

While structuring data for machines is required for the agentic web, agencies must maintain extremely high-quality standards for human readers. Machine readability does not mean sacrificing the brand voice or publishing rigid, robotic copy. Adhering to quality standards, as outlined in Google’s content guidance, ensures machine-optimized text remains highly valuable to human users.

Over-optimizing or generating thin, unhelpful content will result in algorithmic demotions that actively harm the client’s business. For mid-sized agencies focused on margins, it’s tempting to use AI to mass-produce basic content to save time. However, this strategy creates significant long-term risk and compromises client trust.

The agentic web requires content that is engineered for extraction but written for human persuasion. Keeping content genuinely valuable avoids search penalties, which is a baseline requirement for long-term client retention and sustained agency growth.

Structuring the data layer

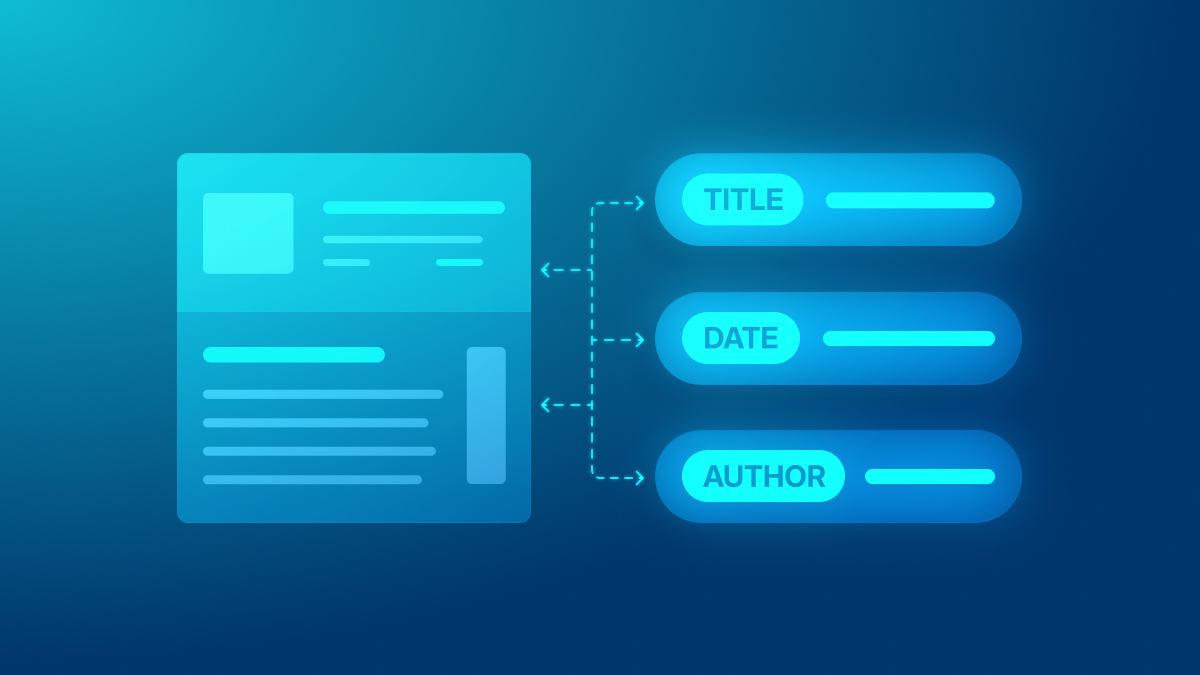

Optimizing for machines requires adherence to new technical baselines at the code level. Visual design alone is no longer sufficient to prove value to a modern client. A beautiful, highly interactive front end is entirely invisible to an AI agent if the underlying code lacks semantic meaning.

The necessity of consistent business information

According to WP Engine’s agency research report, 31% of agencies cite consistent business information as mandatory for their new builds. When automated systems pull client data, they must retrieve accurate, structured information regarding location, pricing, and operating hours without ambiguity. Strict schema markup and plain language architecture are absolute necessities.

Engineering teams should consult the official Schema.org documentation for implementing robust structured data protocols across every single page. For agencies managing multinational clients, inconsistent business information across hundreds of localized pages can completely fracture the brand’s AI visibility. Centralizing this data ensures that whether a human is reading a localized landing page or an AI agent is scraping the site via an API, the core business logic remains perfectly intact, secure, and unambiguous.
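One way to picture centralized business information is a single record that every localized page renders into the same structured data. The sketch below is a minimal example under that assumption; `BusinessRecord`, `to_local_business_jsonld`, and all sample values are hypothetical, while the property names (`priceRange`, `openingHoursSpecification`, `PostalAddress`, and so on) are taken from the Schema.org vocabulary.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class BusinessRecord:
    """Single source of truth for core business facts (hypothetical shape)."""
    name: str
    street: str
    city: str
    country: str
    telephone: str
    price_range: str
    days: tuple
    opens: str
    closes: str


def to_local_business_jsonld(record: BusinessRecord) -> dict:
    """Map the central record onto schema.org LocalBusiness properties."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": record.name,
        "telephone": record.telephone,
        "priceRange": record.price_range,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": record.street,
            "addressLocality": record.city,
            "addressCountry": record.country,
        },
        "openingHoursSpecification": [
            {
                "@type": "OpeningHoursSpecification",
                "dayOfWeek": list(record.days),
                "opens": record.opens,
                "closes": record.closes,
            }
        ],
    }


# Sample values are invented for illustration.
record = BusinessRecord(
    name="Example Studio",
    street="1 Example Street",
    city="Austin",
    country="US",
    telephone="+1-555-0100",
    price_range="$$",
    days=("Monday", "Tuesday", "Wednesday", "Thursday", "Friday"),
    opens="09:00",
    closes="17:00",
)

# Every localized template renders from the same record, so the facts cannot diverge.
markup_by_locale = {
    locale: json.dumps(to_local_business_jsonld(record), sort_keys=True)
    for locale in ("en-us", "de-de")
}
```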

Implementing advanced schema markup

To execute this structured data seamlessly within a content management system (CMS), developers require professional, enterprise-grade tools. Executing strict data relationships is necessary to prevent algorithmic confusion and search visibility drops. Tools like Advanced Custom Fields (ACF), paired with Model Context Protocol (MCP) servers, allow developers to strictly define data relationships and create highly customized metadata layers that algorithms can easily parse.

This infrastructure prevents messy, unstructured data entry by content creators. It also ensures automated systems process the information on the back end exactly as intended. When an agency builds customized data fields, they empower the client to update the website without accidentally breaking the strict schema markup required by machine consumers. This separation of content creation from technical architecture is a massive operational advantage. It’s particularly valuable for agencies managing dozens or hundreds of client sites. It will help reduce support tickets, eliminate daily firefighting, and reclaim unbillable maintenance hours, while protecting their bottom line.
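The idea of guarding schema markup against messy data entry can be sketched as a validation step that runs before content is published. This is not ACF’s API; the field names, rules, and the `validate_fields` function below are assumptions chosen to illustrate the pattern in plain Python.

```python
import re

# Hypothetical field rules; a real build would mirror the site's own field groups.
REQUIRED_FIELDS = ("name", "price", "opens", "closes")
TIME_PATTERN = re.compile(r"^([01]\d|2[0-3]):[0-5]\d$")


def validate_fields(fields: dict) -> list[str]:
    """Return human-readable problems; an empty list means the entry is safe to publish."""
    errors = []

    # Every required field must be present and non-blank.
    for key in REQUIRED_FIELDS:
        if not str(fields.get(key, "")).strip():
            errors.append(f"{key}: required")

    # Price must parse as a non-negative number.
    price = fields.get("price")
    if price not in (None, ""):
        try:
            if float(price) < 0:
                errors.append("price: must not be negative")
        except (TypeError, ValueError):
            errors.append("price: must be a number")

    # Opening hours must use 24-hour HH:MM so they map cleanly onto schema markup.
    for key in ("opens", "closes"):
        value = fields.get(key)
        if value and not TIME_PATTERN.match(str(value)):
            errors.append(f"{key}: expected HH:MM (24-hour)")

    return errors
```

For example, an entry with a blank name, a price of "cheap", and an opening time of "9am" would come back with three problems, and the editor could be asked to correct them before the structured data is regenerated.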

Exposing data with MCP servers

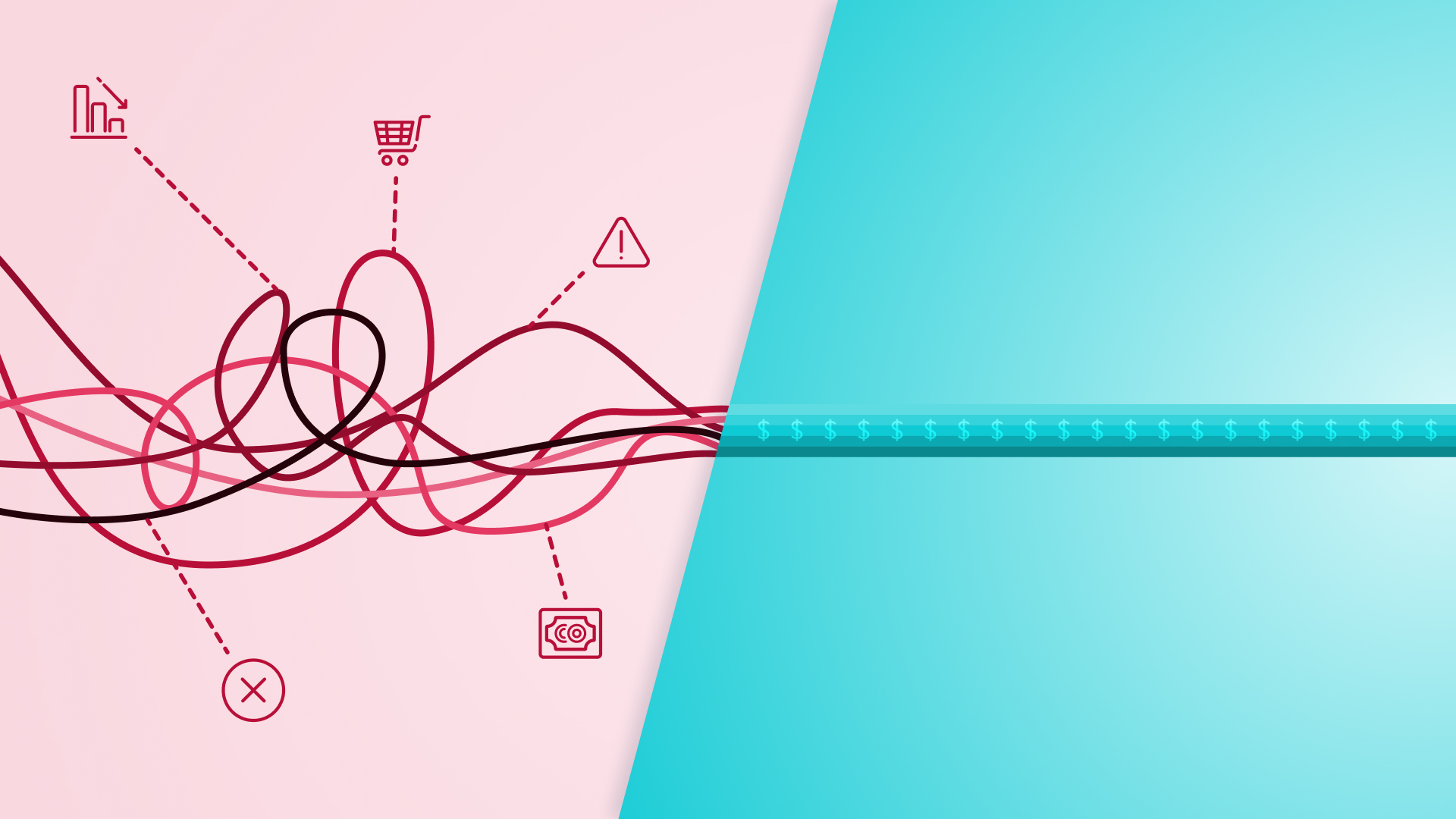

The technical frontier of the agentic web involves allowing external, automated agents to read CMS data quickly and easily. The industry is moving toward a web where AI agents operate directly on behalf of users. This will include fetching data, comparing products, and summarizing long-form content instantly. This complex data exchange is achieved using MCP and customized, secure APIs.

Bridging the engineering skills gap

Building these secure connections requires specific, modern engineering knowledge. Gartner’s finding that 80% of the engineering workforce will need to upskill shows that agencies must prioritize training their teams to implement these advanced technical integrations. Falling behind on training will directly affect an agency’s ability to win new business. As the technology evolves, any delay in upskilling will quickly snowball into an overwhelming challenge.

Firms that fail to upskill their engineers will find themselves unable to compete for enterprise contracts that demand modern dual-audience architecture. For technical directors and CTOs at larger agencies, this represents a significant shift in hiring, training, and operational structure.

Developers at agencies of all sizes need to move beyond building simple visual templates into roles as intelligence architects. These new architects will need to understand the deep nuances of machine-readable data transfer, API security protocols, and the high-compute capabilities required for edge readiness.

Standardizing external data connections

Using MCP and customized APIs for machine-readable data transfer establishes a standardized, reliable framework for the future. Developers can review Anthropic’s introduction to the Model Context Protocol to understand exactly how to build standardized, highly secure data connections between a client’s website and external LLMs.
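To make the standardized connection more concrete, the sketch below shows the general shape of two MCP requests, `tools/list` and `tools/call`, carried as JSON-RPC 2.0 messages. It is a simplified illustration rather than a working MCP server: it skips the initialization handshake and the transport layer, the `get_page` tool and the `PAGES` content are hypothetical, and the message fields reflect one reading of the public specification that should be checked against the current version.

```python
import json

# Hypothetical CMS content; a real server would query the CMS instead.
PAGES = {
    "pricing": "Plans start at $20 per month.",
    "hours": "Open Monday to Friday, 09:00 to 17:00.",
}

# Tool description advertised to the AI client (hypothetical tool).
TOOLS = [
    {
        "name": "get_page",
        "description": "Return the published text of a page by slug.",
        "inputSchema": {
            "type": "object",
            "properties": {"slug": {"type": "string"}},
            "required": ["slug"],
        },
    }
]


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request string with one response string."""
    request = json.loads(raw)
    request_id = request.get("id")
    method = request.get("method")
    params = request.get("params") or {}

    def reply(**body):
        return json.dumps({"jsonrpc": "2.0", "id": request_id, **body})

    if method == "tools/list":
        return reply(result={"tools": TOOLS})

    if method == "tools/call":
        if params.get("name") != "get_page":
            return reply(error={"code": -32602, "message": "Unknown tool"})
        slug = (params.get("arguments") or {}).get("slug", "")
        text = PAGES.get(slug)
        if text is None:
            # Tool-level failure is reported inside the result, not as a protocol error.
            message = f"No page found for slug: {slug}"
            return reply(result={"content": [{"type": "text", "text": message}], "isError": True})
        return reply(result={"content": [{"type": "text", "text": text}], "isError": False})

    return reply(error={"code": -32601, "message": "Method not found"})
```

In practice, teams would more likely build on an official MCP SDK than hand-roll message handling. The point of the sketch is that the AI client discovers what the site offers and then requests it through a predictable, typed interface instead of scraping rendered pages.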

For agencies building sophisticated digital experiences, utilizing WP Engine Headless WordPress®¹ establishes a decoupled architecture capable of securely delivering data via APIs to any machine consumer. Furthermore, implementing modern discovery tools like MCP WP Engine Smart Search ensures that websites’ conversational interfaces (like chatbots) are grounded in the live content from the site.

This combined architecture ensures that data flows efficiently without compromising client privacy, data ownership, or server stability. When an agency can guarantee that a client’s proprietary data is securely exposed to approved AI models without risking a massive server crash from automated bot scraping, they elevate their status from a basic service provider to an essential, irreplaceable technology partner.
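One common building block for keeping automated traffic from overwhelming a server is per-client rate limiting. The token bucket below is a generic sketch of that technique, not a description of any particular hosting platform’s bot management; the class name, parameters, and the injectable `clock` argument are assumptions for illustration.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, then refill at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Spend one token if available; return whether the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client identifier (for example, an API key or verified bot name).
buckets = {}


def allow_request(client_id, rate_per_sec=1.0, capacity=5):
    if client_id not in buckets:
        buckets[client_id] = TokenBucket(rate_per_sec, capacity)
    return buckets[client_id].allow()
```

Keying the buckets by client lets approved AI agents receive a generous allowance while unknown scrapers are throttled, which is the kind of distinction a bot management layer is meant to enforce.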

Maintaining the human experience

In this new era, serving machines data shouldn’t degrade the human experience. While back-end data structuring feeds agents the context they require, the front end must remain highly accessible, intuitive, and conversion-focused for the actual people navigating the site.

Ensuring compliance and accessibility

Engineering teams must continue adhering to the W3C Web Content Accessibility Guidelines (WCAG) to ensure dual-architecture builds remain fully compliant for all human users. Clean semantic HTML, proper contrast ratios, and complete keyboard navigability are essential components of a modern website.

Interestingly, strict adherence to accessibility standards natively improves machine readability, proving that the two goals are deeply aligned rather than competing. An accessible website is, by definition, a highly structured website.

When an agency ensures that a screen reader can perfectly navigate a page’s heading hierarchy to assist a visually impaired user, they are simultaneously ensuring that an AI crawler can extract that exact same structural context. This overlap allows agencies to pitch accessibility not just as a strict legal compliance measure, but as a direct driver of AI search visibility and long-term brand equity.
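The overlap between screen readers and crawlers can be checked with a small audit script. The sketch below uses Python’s standard `html.parser` module to extract a page’s heading outline and flag skipped levels; the class name `HeadingOutline`, the helper `skipped_levels`, and the sample HTML are assumptions for illustration.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) for every h1 to h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def skipped_levels(headings):
    """Return (previous_level, level, text) wherever the outline jumps more than one level down."""
    problems = []
    previous = 0
    for level, text in headings:
        if level > previous + 1:
            problems.append((previous, level, text))
        previous = level
    return problems


# Sample markup invented for illustration: the h4 skips the h3 level.
sample_html = "<h1>Services</h1><p>Intro</p><h2>Web design</h2><h4>Pricing</h4>"
parser = HeadingOutline()
parser.feed(sample_html)
issues = skipped_levels(parser.headings)
```

The same outline that assistive technology exposes for navigation is what a crawler would use to infer how sections relate, so a clean result here serves both audiences.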

Designing intelligent, user-friendly interfaces

Nielsen Norman Group’s research on AI tools and productivity gains highlights the absolute necessity of balancing machine logic with human usability. Intelligent websites with user-friendly interfaces, such as dynamic search bars, personalized content recommendations, or conversational workflows, benefit real-world users by helping them find answers faster and with less friction.

Balancing machine logic with an intuitive conversational user interface creates a seamless journey for the end consumer. The dual audience approach ultimately results in a faster, smarter, and more profitable digital experience for the client. By architecting the back end for machines, the front end becomes far more dynamic for humans. This results in higher engagement rates, improved client satisfaction, and significantly stronger long-term agency partnerships.

Next steps

Building for a dual audience protects a client’s digital presence in a rapidly shifting, highly volatile landscape. As an agency, you have a distinct opportunity to become the trusted advisor who guides your clients safely through this technological evolution. Agencies should recognize this as a massive competitive advantage. Helping insulate clients from the risks arising from algorithmic changes will translate directly to increased client retention and higher recurring revenue in this tumultuous time.

This is a job for both engineering and strategy teams. Prioritize offering AI readiness audits while having engineering teams evaluate and update recommended architectures for their legacy clients and future builds alike. Utilize MIT Sloan’s AI implementation strategies to guide your operational roadmap, ensuring your agency delivers the technical reliability essential to prevent client churn.

Transitioning your business model to offer continuous data structuring and architectural consulting provides the technical foundation required to grow your clients’ brands into the future and secure your position as a trusted advisor to their business.

Frequently asked questions

Does optimizing for machines hurt traditional human UX?

No. Practices like clear heading structures, fast load times, and rigorous structured data formatting natively improve the human experience and overall site accessibility.

What is the most important first step for machine optimization?

Ensure all core business information, product pricing, and service descriptions are clearly defined using highly accurate schema markup and plain language content summaries across the entire digital property.